Do you find testing especially testing?

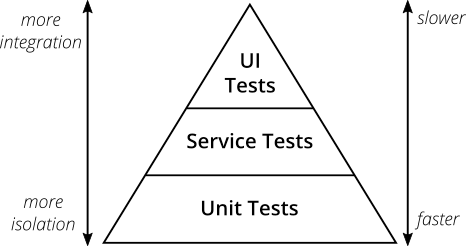

I’ve always been taught to use the Testing Pyramid [1].

The basic idea is to write the most unit tests, as they are the smallest in scope and fastest to run. Then you write fewer integration tests, which are larger in scope and slower to run, before you finally write an even smaller number of end-to-end tests, which are the largest in scope and slowest to run.

I’ve always been taught to use Test Driven Development [2][3] (TDD).

The basic idea of this is that you should write your tests before you write any code. Your tests codify the required behaviours of the software you then write, giving you an easy way to know when the feature you’re working on is complete. This should also document the context of the code you’ve written, making future changes theoretically easier.

But I keep observing common issues with unit testing on the digital delivery teams I’ve worked with over the past several years.

Recognise any of these from projects you’ve worked on?

Problem 1: Lack of test confidence – Your application fails when you deploy it, despite all the unit tests you’ve written for the change you’re making and high test coverage.

Problem 2: Testing stalls change – You make a simple change to your code, but you then need to totally restructure lots of unit tests in order to do it.

Problem 3: High cognitive load – You want to understand the behaviour of a section of your codebase, but reading the unit tests provides little help.

I’ve observed these problems dozens of times and they’ve always frustrated me because they seem to directly oppose the entire point of testing!

I believe good testing provides several significant benefits in software delivery:

- Good tests should give us confidence in the correctness of our changes

- Good tests should allow us to make changes more quickly

- Good tests should document how our system behaves

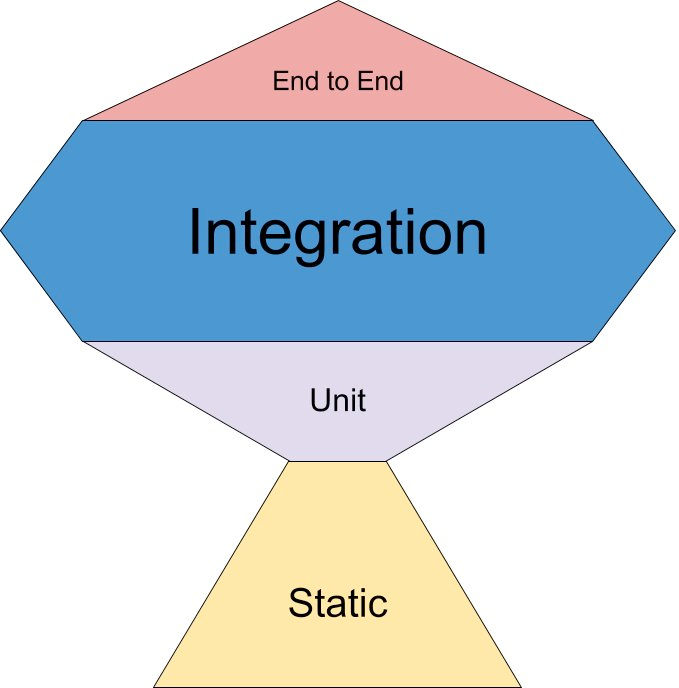

Introducing the Testing Trophy

The culprit is the Testing Pyramid. It’s outdated and obsolete.

I’d like to introduce you to the Testing Trophy [4][5][6] as a replacement. This is also sometimes known as the Testing Vase or Testing Honeycomb, but it’s essentially the same thing.

Integration tests are now the widest layer

The goal of these tests is to codify business logic. This typically means each test maps to either an individual acceptance criterion or an obvious, easy-to-understand part of a user journey.

For example, if your user journey is “to allow users to log in and check their health records”, you might have an integration test to check they can log in, another to check that a logged-in user can access their health records, and a third to check that a person’s health record displays the correct information.

Integration tests shouldn’t really care about the internals of your code. In theory, if you re-wrote your codebase in a new language, you should still be able to run the existing integration tests with minimal change needed.
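To make the health-records example concrete, here is a minimal sketch in Python. Everything in it is hypothetical: `HealthApp`, its two methods, and the sample data are stand-ins I've invented for illustration, and in a real project the tests would talk to a running service rather than an in-process class. The point is that each test maps to one chunk of the user journey and only touches the public interface.

```python
class HealthApp:
    """Hypothetical stand-in for the system under test."""

    def __init__(self):
        # Plain-text passwords keep the sketch short; a real system would not do this.
        self._passwords = {"alice": "correct-horse"}
        self._records = {"alice": {"blood_type": "O+", "allergies": ["penicillin"]}}
        self._sessions = {}  # session token -> username

    def log_in(self, username, password):
        """Return a session token on success, or None on failure."""
        if self._passwords.get(username) != password:
            return None
        token = f"session-{len(self._sessions) + 1}"
        self._sessions[token] = username
        return token

    def get_health_record(self, token):
        """Return the health record for the session's user."""
        if token not in self._sessions:
            raise PermissionError("not logged in")
        return self._records[self._sessions[token]]


def test_user_can_log_in():
    app = HealthApp()
    assert app.log_in("alice", "correct-horse") is not None


def test_logged_in_user_can_access_their_health_record():
    app = HealthApp()
    token = app.log_in("alice", "correct-horse")
    assert app.get_health_record(token) is not None


def test_health_record_displays_correct_information():
    app = HealthApp()
    token = app.log_in("alice", "correct-horse")
    record = app.get_health_record(token)
    assert record == {"blood_type": "O+", "allergies": ["penicillin"]}
```

None of the tests reach into `_passwords`, `_records`, or `_sessions`, so the internals could be rewritten without touching them.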

Integration testing also avoids some of the common problems attributed to end-to-end testing – extremely long, brittle tests which fail intermittently. By splitting these long journeys up, integration tests are more reliable.

To address the three problems described above (lack of test confidence, testing that stalls change, and high cognitive load), I recommend migrating the bulk of your unit tests to integration tests.

End-to-end tests stay very thin

The goal of end-to-end testing is to ensure your application works as expected in an environment that closely replicates the production environment. In other words, they’re infrastructure tests. Only a few of these tests are needed, and they should cover complete user journeys.

To keep your end-to-end testbench manageable, it needs to rely on the presence of your integration testbench. This means you can keep the number of expensive end-to-end tests to a minimum.

Unit testing is now also a very thin layer

There are two goals for unit testing now: exhaustive testing and complex-subsystem testing. Both of these rely on your integration testbench to assert that your codebase actually works in a general sense, and only test very specific functionality that would otherwise slip through the cracks.

Exhaustive testing is for when you want to test all possible values or combinations within a feature. You could integration test the “common” combinations to check that the code works overall, but you could then add a unit test that would loop through every possible single value or combination of values to ensure they all still work.

For example, imagine you’re building an online shop, and you have integration tests that verify that users can successfully purchase green paint. Exhaustive unit tests could build on this to ensure the paint is also available in the 100s of other colours your shop offers.
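As a rough sketch of what an exhaustive unit test for the paint shop could look like, the example below loops over every colour and finish combination. The catalogue, the prices, and the `price_paint` function are all assumptions made up for illustration.

```python
import itertools

# Hypothetical catalogue; a real shop would have hundreds of colours.
COLOURS = ["green", "red", "blue", "white"]
FINISHES = ["matt", "silk", "gloss"]
PRICE_PER_LITRE_PENCE = {"matt": 1200, "silk": 1400, "gloss": 1600}


def price_paint(colour, finish, litres):
    """Return the price in pence for the requested paint."""
    if colour not in COLOURS:
        raise ValueError(f"unknown colour: {colour}")
    if finish not in PRICE_PER_LITRE_PENCE:
        raise ValueError(f"unknown finish: {finish}")
    return PRICE_PER_LITRE_PENCE[finish] * litres


def test_every_colour_and_finish_can_be_priced():
    # Walk every combination rather than only the "common" ones.
    for colour, finish in itertools.product(COLOURS, FINISHES):
        price = price_paint(colour, finish, litres=1)
        assert price > 0, f"{colour}/{finish} has no valid price"
```

If someone later adds a finish to `FINISHES` but forgets its price, this test fails for that combination. With pytest, the same loop is often written using `pytest.mark.parametrize` so that each combination is reported separately.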

Complex-subsystem testing is for when you have an isolated nugget of complexity in your codebase that you want to test in isolation. Because it has many edge cases and is complex, highly focused unit testing is important to ensure it works correctly. In essence, this pattern uses the same philosophy as integration testing our services as opaque boxes – just zoomed in to treat a specific class, module, or function as an opaque box instead.

For example, I once wrote a set of complex-subsystem unit tests for a smart rate limiter I was working on. You could integration test that the rate limiter works for several examples in the wider context of the application it lived within, but then add unit tests on top of this for every edge case you can think of for just the rate limiting logic.
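The sketch below shows the shape of complex-subsystem unit tests, using a deliberately simple sliding-window rate limiter. It is not the smart rate limiter mentioned above; `SlidingWindowRateLimiter` and `FakeClock` are hypothetical names, and the clock is injected so the edge cases can be tested without sleeping.

```python
from collections import deque


class FakeClock:
    """Test double for time, so tests can move time forward instantly."""

    def __init__(self):
        self.now = 0.0

    def __call__(self):
        return self.now

    def advance(self, seconds):
        self.now += seconds


class SlidingWindowRateLimiter:
    """Allow at most max_requests within any window_seconds-long window."""

    def __init__(self, max_requests, window_seconds, clock):
        self._max_requests = max_requests
        self._window_seconds = window_seconds
        self._clock = clock
        self._accepted = deque()  # timestamps of accepted requests

    def allow(self):
        now = self._clock()
        # Forget accepted requests that have left the window.
        while self._accepted and now - self._accepted[0] >= self._window_seconds:
            self._accepted.popleft()
        if len(self._accepted) >= self._max_requests:
            return False
        self._accepted.append(now)
        return True


def test_burst_is_cut_off_at_the_limit():
    limiter = SlidingWindowRateLimiter(3, 10, FakeClock())
    assert [limiter.allow() for _ in range(4)] == [True, True, True, False]


def test_request_is_allowed_exactly_when_the_window_expires():
    clock = FakeClock()
    limiter = SlidingWindowRateLimiter(1, 10, clock)
    assert limiter.allow()
    clock.advance(9.5)
    assert not limiter.allow()
    clock.advance(0.5)
    assert limiter.allow()


def test_rejected_requests_do_not_extend_the_window():
    clock = FakeClock()
    limiter = SlidingWindowRateLimiter(1, 10, clock)
    assert limiter.allow()
    clock.advance(5)
    assert not limiter.allow()  # rejected, so it must not be recorded
    clock.advance(5)
    assert limiter.allow()


def test_zero_limit_rejects_everything():
    limiter = SlidingWindowRateLimiter(0, 10, FakeClock())
    assert not limiter.allow()
```

Each test treats the limiter as an opaque box: it only calls `allow()` and moves the clock, so the internal `deque` could be swapped for another data structure without rewriting the tests.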

Linting/Static analysis is the new base layer of the model

The goal of this layer is simply to speed up our ability to write quality code and tests. By using automated tools, we can catch more careless errors without having to test for them specifically.
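As a small illustration, type hints are one way to let a tool catch a careless error before any test runs. The function below is a hypothetical example; the choice of type checker (mypy and pyright are two common ones for Python) is an assumption, not something this model prescribes.

```python
def apply_discount(price_pence: int, percent: int) -> int:
    """Return the price after a whole-number percentage discount."""
    return price_pence * (100 - percent) // 100


total = apply_discount(2000, 25)

# A type checker would be expected to flag the call below without running it,
# because "25%" is a str rather than an int. Left uncommented, it would only
# fail at runtime with a TypeError.
# apply_discount(2000, "25%")
```

No test has to exist for the bad call; the mistake is caught as the code is written.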

And that’s the Testing Trophy!

What does this look like in practice though?

But what about the real world? It’s all well and good to evangelise a theoretical testing model, but how would you go about applying this to a real project you’re working on?

Below are some examples of common queries, and how I’d approach addressing them.

“I’m on a project with an OO codebase and lots of unit tests for each class. How can I introduce integration testing?”

- You can probably use your existing test runner (Jest, JUnit, pytest, etc.) and extend it with tools like LocalStack, WireMock, or Testcontainers.

- You can then write integration tests which read like chunks of a user journey or individual acceptance criteria. Something like “I want to allow users with an existing account to log in” or “I want to reject users who log in with the wrong password”. This is often referred to as Behavior Driven Development (BDD).

- You can convince your team with working code examples to back up what you’re saying. Hopefully, it shouldn’t take them long to see that writing a handful of integration tests with very few mocks saves them considerable time and effort!

“I’m working on a frontend using a modern JS framework. What does the testing trophy even look like for this codebase?”

- Render the entire app (and spin up any backend services) and use tools like Cypress or Playwright to end-to-end test full user journeys (e.g., logging in, adding a product to the basket, checking out, and requesting a refund).

- Render each page and click through multiple components to integration test. These tests should naturally make sense as parts of a user journey (e.g., a login flow).

- Render each significant visual component and verify that it renders correctly on screen during unit testing (exhaustive unit testing). You can also use tools such as Storybook or Chromatic to help with this.

- Render each complex (think lots of gnarly state logic, etc.) component and run through edge cases (complex subsystem unit testing).

“Our team has an end-to-end testbench which has all of our user stories in but is flaky, expensive to run, and breaks all the time. How do we fix this?”

- Is your entire end-to-end test stack running in a production-like environment? If not, fix this first! Even if it means you’re no longer able to run these tests locally, that’s an acceptable tradeoff.

- Are your tests actually written as full user journeys? Or do they try to do much more, testing edge cases or individual chunks of a user journey? If the test isn’t a full user journey through your system (e.g., I want to buy new shoes → my new shoes are on my feet), it probably belongs as an integration test.

“What’s the problem with just having lots of integration and lots of unit tests?”

- It’s fine to have partially overlapping tests! This is often a good pattern: an exhaustive unit test (the login button renders), an integration test (I can log in), and a larger e2e test (I can log in, buy something, and pay for it).

- If your integration tests are easy to run, can be run locally and debugged, having equivalent unit tests that try to do exactly the same thing is unnecessary, increases cognitive load, and runs the risk of rotting over time.

“My manager/boss says that we should aim for X% code coverage”

- Code coverage has diminishing returns (beyond 70%). It’s often good at revealing which chunks of a codebase haven’t been tested, but it’s bad at revealing which lines of code you should/shouldn’t be hitting [7].

- If their focus is on overall system performance, you could consider trialling DORA metrics within your team or organisation instead [8].

- They might start to trust you more when your new testbench starts automatically catching issues that the existing unit tests miss. I added a simple end-to-end testbench to a service our team owned, which had previously been very heavily unit-tested. It caught 5 bugs in the first month that would’ve otherwise gone to production without being spotted by the existing testbench.
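On the coverage point above, here is a tiny hypothetical example of why a high percentage on its own says little. The single test executes every line of `average`, so a line-coverage tool would report the function as fully covered, yet an obvious input still crashes it.

```python
def average(values):
    """Return the arithmetic mean of a list of numbers."""
    return sum(values) / len(values)


def test_average_of_three_numbers():
    # This one test runs every line of average().
    assert average([1, 2, 3]) == 2


def average_crashes_on_empty_input():
    """Show the gap that the fully covered function still has."""
    try:
        average([])
    except ZeroDivisionError:
        return True
    return False
```

Coverage tells you the line was run, not whether the behaviours that matter were checked.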

“Our team has dedicated testers/SDETs, I don’t want to upset them by changing how we test”

- Most organisations employ testers to be test experts, not human computers. Most organisations and testers welcome automation and the increased confidence it brings through better testing.

- Bring them into the conversation early if you can. They’re likely to be highly in favour of new testbenches and better automation! After all, these tools make their jobs easier, freeing them up to focus on more important work like paying down test technical debt or activities like user acceptance testing (putting new stuff in front of actual users).

Annex – The types of tests

I want to take the time to define exactly what I mean when referring to all the different types of tests. In my experience, everyone has a subtly different definition of them – so for the sake of my sanity and your understanding, here are the definitions I’m using [9][10]:

End-To-End (E2E) testing

Sometimes referred to as Functional, Integrated, System, or Smoke testing.

End-to-end tests are typically scoped to Level 1 (Software System) of the C4 model. Of all the tests, end-to-end tests are as realistic as you’re able to get and often need to be run in a production-like environment. These tests typically read like fully complete user-journeys.

External software systems are mocked as needed and pragmatic infrastructure changes are made (for example, running with fewer replicas than the production instance to save on server costs).

End-to-end tests are meant to be wide-reaching and realistic, at the expense of speed and ease of being run.

Integration testing

Sometimes referred to as Functional, Component or Service testing.

Integration tests are typically scoped to Level 2 (Containers) of the C4 model. Integration tests are the middle child between end-to-end and unit tests, carefully balancing scope, speed, ease of running, and realism. These tests typically read like chunks of a user-journey, or specific acceptance criteria.

Other major components of your software system are mocked out, and tightly-coupled parts aren’t. For example, integration tests for a login API might mock everything except the API, a database storing login credentials, and a queue which login requests are read from. Infrastructure is also often mocked, with tools such as Docker, LocalStack, or lightweight Kubernetes clusters being popular choices to only stand up a small handful of tightly-coupled services.
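Here is a minimal sketch of that mocking boundary for the login example. It makes several assumptions: an in-memory SQLite database and a `queue.Queue` stand in for the real database and queue you would normally start in containers, and `process_login_requests` and the `notifier` service are hypothetical names. The tightly-coupled parts are real; only the other component is mocked.

```python
import queue
import sqlite3
from unittest.mock import Mock


def make_credentials_db():
    """Create a real (in-memory) database holding login credentials."""
    db = sqlite3.connect(":memory:")
    db.execute("CREATE TABLE credentials (username TEXT PRIMARY KEY, password TEXT)")
    # Plain-text passwords keep the sketch short; a real system would store hashes.
    db.execute("INSERT INTO credentials VALUES (?, ?)", ("alice", "correct-horse"))
    db.commit()
    return db


def process_login_requests(requests, db, notifier):
    """Drain the queue, check each request against the database, return results."""
    results = {}
    while not requests.empty():
        username, password = requests.get()
        row = db.execute(
            "SELECT password FROM credentials WHERE username = ?", (username,)
        ).fetchone()
        accepted = row is not None and row[0] == password
        if not accepted:
            notifier.failed_login(username)  # a separate component, mocked in tests
        results[username] = accepted
    return results


def test_wrong_credentials_are_rejected_and_reported():
    db = make_credentials_db()
    requests = queue.Queue()
    requests.put(("alice", "correct-horse"))
    requests.put(("bob", "guess"))
    notifier = Mock()

    results = process_login_requests(requests, db, notifier)

    assert results == {"alice": True, "bob": False}
    notifier.failed_login.assert_called_once_with("bob")
```

The SQL, the queue handling, and the login logic are all exercised together, which is where a purely mocked unit test would tend to miss problems.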

Integration tests strike a balance. Unlike unit tests, they stay close to reality by treating your software as an opaque box; unlike slower, more brittle end-to-end tests, they remain fast and simple to write and run.

Unit testing

Unit tests don’t really go by any other names, but there are a million competing definitions of what constitutes a “unit” of software!

Unit tests are typically scoped to either Level 3 (Components) or Level 4 (Code) of the C4 model. Due to the limited scope of these tests, other classes/modules/functions/objects within the same codebase are mocked as needed.

Unit tests are meant to be quick to write and run, at the expense of scope and realism.

Static analysis

Sometimes also known as linting. Static analysis covers a vast array of tools and automations and can often be found in places like pre-commit hooks, continuous integration pipelines, and even your code editor. This bundles together linters, formatters, and type checkers, among other tools.

Static analysis tools are typically scoped to Level 4 (Code) of the C4 model. They aren’t really “tests” in the traditional sense, but enforce things like code styling and good practices.

Ideally, they should be run as close to code being written as possible for the shortest feedback loop possible.

1. Mike Cohn, Succeeding with Agile: Software Development Using Scrum, 2009

2. https://martinfowler.com/bliki/TestDrivenDevelopment.html

3. https://www.madetech.com/blog/messy-software-projects/

4. https://www.wiremock.io/post/rethinking-the-testing-pyramid

5. https://kentcdodds.com/blog/the-testing-trophy-and-testing-classifications

6. https://kentcdodds.com/blog/write-tests

7. https://kentcdodds.com/blog/write-tests#not-too-many

8. https://www.thoughtworks.com/en-gb/insights/articles/improving-your-bottom-line-with-four-key-metrics

9. https://martinfowler.com/bliki/UnitTest.html#SolitaryOrSociable

10. https://martinfowler.com/articles/practical-test-pyramid.html